Introduction

Most AI today can answer questions. Agentic AI can take action.

That shift — from responding to executing — is what makes this moment important.

This shift isn’t hypothetical. Gartner predicts that 33% of enterprise software applications will include agentic AI by 2028, up from less than 1% in 2024—a signal that “agents” are moving from experimental add-ons to core product capability.

If you’ve been hearing the term “agentic AI” everywhere and wondering whether it’s just another buzzword, this guide will give you clarity. We’ll break it down simply, look at real examples, discuss risks (because they matter), and explore how businesses should approach it responsibly.

What Is Agentic AI?

Agentic AI refers to AI systems that can understand goals, plan steps, use tools, and take actions with limited supervision.

Instead of just generating content or answers, agentic AI works toward an outcome.

For example:

- Don’t just draft an email — send it.

- Don’t just suggest a report — pull the data, analyze it, and summarize findings.

- Don’t just recommend follow-ups — schedule them.

In simple terms, agentic AI doesn’t just generate ideas. It moves work forward.

In my 15+ years working with operations and technology teams, the biggest bottleneck has never been “lack of intelligence.” It’s been follow-through. Work stalls between tools. Approvals sit in inboxes. Updates don’t get logged. Agentic AI is essentially an attempt to close that execution gap.

That’s the real promise.

The Evolution of Agentic AI: From Automation to Autonomous Systems

To understand where agentic AI is going, it helps to understand where it came from.

AI didn’t suddenly become “agentic.” It evolved.

Phase 1: Rule-Based Automation

- If X → Do Y

- Static workflows

- Breaks when inputs change

These systems were deterministic. No reasoning. No adaptability.

Phase 2: Machine Learning Optimization

- Predictive models

- Pattern recognition

- Decision support

ML could predict outcomes — but it still couldn’t execute multi-step workflows independently.

Phase 3: Generative AI

- Natural language understanding

- Content generation

- Conversational interfaces

This was a breakthrough.

But generative AI still required humans to:

- Decide what to do next

- Execute actions manually

- Connect outputs to systems

It enhanced thinking — not execution.

Phase 4: Agentic AI

This is the phase now underway, though most organizations are still early in it. In McKinsey’s 2025 global survey, 62% of respondents said their organizations are at least experimenting with AI agents: strong interest, but not yet full-scale maturity.

Now we see systems that:

- Interpret goals

- Plan workflows

- Use tools

- Execute actions

- Learn from outcomes

The shift is from “What should I do?” to “Let me handle this.”

That’s the real evolution.

Agentic AI represents the transition from intelligent assistance to intelligent orchestration.

How Does Agentic AI Work?

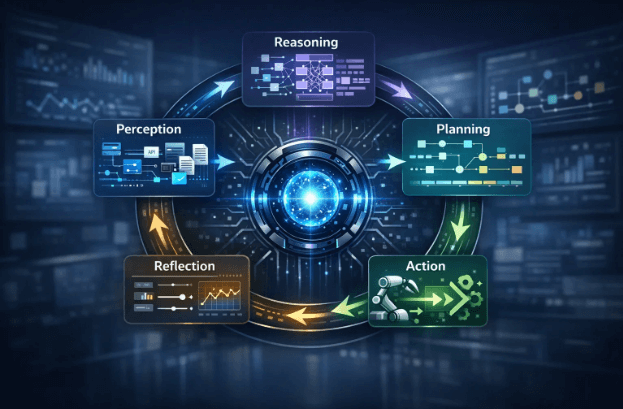

At a high level, agentic AI follows a loop:

Perception → Reasoning → Planning → Action → Reflection

But that simple description hides a lot of complexity.

If you really want to understand how agentic AI works — especially in business environments — you need to understand the layers beneath that loop.

Let’s break it down clearly.

Step 1: Perception — Understanding the Environment

Before an agent can act, it needs context.

Perception is about collecting signals from:

- Documents

- Databases

- APIs

- Emails

- Dashboards

- CRM/ERP systems

- User prompts

- Historical records

This is where many implementations quietly fail.

If the data is:

- Incomplete

- Outdated

- Inconsistent

- Poorly structured

Then the agent starts reasoning on weak foundations.
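To make “weak foundations” concrete, here is a minimal Python sketch of the kind of pre-flight check a team might run before letting an agent reason over a dataset. The `Record` shape, the 30-day freshness threshold, and the single-currency rule are illustrative assumptions, not a standard; real checks depend entirely on your own systems.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional, Tuple

@dataclass
class Record:
    # Hypothetical CRM record shape, for illustration only.
    customer_id: str
    revenue: Optional[float]
    currency: Optional[str]
    updated_at: datetime

def quality_issues(records: List[Record], max_age: timedelta = timedelta(days=30),
                   now: Optional[datetime] = None) -> List[Tuple[str, str]]:
    """Flag records an agent should not reason on: incomplete, outdated, or inconsistent."""
    now = now or datetime.now(timezone.utc)
    issues = []
    for r in records:
        if r.revenue is None or r.currency is None:
            issues.append((r.customer_id, "incomplete"))
        if now - r.updated_at > max_age:
            issues.append((r.customer_id, "outdated"))
    # Mixed currencies across records make totals meaningless without conversion.
    currencies = sorted({r.currency for r in records if r.currency})
    if len(currencies) > 1:
        issues.append(("*", "inconsistent currencies: " + ", ".join(currencies)))
    return issues

now = datetime(2025, 1, 31, tzinfo=timezone.utc)
records = [
    Record("a", 100.0, "USD", datetime(2025, 1, 30, tzinfo=timezone.utc)),
    Record("b", None, "USD", datetime(2025, 1, 30, tzinfo=timezone.utc)),
    Record("c", 50.0, "EUR", datetime(2024, 11, 1, tzinfo=timezone.utc)),
]
# Route these to a human (or a cleanup job) before the agent plans anything.
issues = quality_issues(records, now=now)
```

The point of the design is that the check runs before the model sees the data, so bad inputs are surfaced as issues rather than silently absorbed into the agent’s reasoning.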

Think of perception as the agent’s sensory system.

No clarity → No intelligent action.

This isn’t just an AI problem—it’s a work problem. Microsoft’s Work Trend Index found that 62% of survey respondents say they struggle with too much time spent searching for information in their workday. Agentic AI can reduce that drag—but only when the underlying systems are organized enough for agents to retrieve the right signal reliably.

Step 2: Reasoning — Interpreting the Goal

This is where the large language model (LLM) comes in.

The agent receives:

- A goal (“Prepare monthly performance summary”)

- Context (team data, KPIs, attendance logs, deadlines)

The reasoning engine determines:

- What does “performance summary” mean here?

- Which metrics matter?

- Who is the audience?

- What constraints exist?

- Are there missing inputs?

This is not just answering a question.

It’s interpreting intent.

That’s a major leap from traditional automation.
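One way to picture this step: the model turns a free-text goal into a structured interpretation, and the surrounding code checks that interpretation against the context it actually has. In the sketch below the interpretation is hard-coded where a real system would get it from an LLM, and `InterpretedGoal` and `missing_inputs` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class InterpretedGoal:
    # What the reasoning step produces from a free-text goal (hypothetical structure).
    goal: str
    audience: str
    required_metrics: List[str]
    constraints: List[str] = field(default_factory=list)

def missing_inputs(interpreted: InterpretedGoal, context: Dict[str, object]) -> List[str]:
    """Return the metrics the goal needs that the available context does not supply."""
    return [m for m in interpreted.required_metrics if m not in context]

# Hard-coded here; in a real agent an LLM would fill this in from the goal and context.
interpreted = InterpretedGoal(
    goal="Prepare monthly performance summary",
    audience="department heads",
    required_metrics=["tasks_completed", "attendance_rate", "deadline_hit_rate"],
    constraints=["deliver by the 3rd business day"],
)
context = {"tasks_completed": 412, "attendance_rate": 0.96}

gaps = missing_inputs(interpreted, context)
if gaps:
    # Surface the gap instead of letting the agent invent a number.
    print("Missing inputs, asking a human for:", gaps)
```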

Step 3: Planning — Breaking the Goal Into Steps

This is where agentic AI starts to feel different.

Instead of directly generating output, it creates a plan.

For example, for “Prepare monthly performance summary,” it might:

- Pull productivity metrics

- Gather project completion data

- Identify anomalies

- Compare month-over-month trends

- Draft summary insights

- Route for approval

- Send report to stakeholders

Planning is what makes it agentic.

It decomposes objectives into executable tasks.

In more advanced systems, planning may involve:

- Branching logic (if X, then Y)

- Risk evaluation

- Conditional approvals

- Fallback strategies

That’s orchestration — not just response generation.
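The plan itself can be represented as plain data. The sketch below, assuming hypothetical step functions, shows three of the ideas above: ordered steps, a conditional approval gate, and a fallback when a step fails. It is a toy executor for illustration, not how any particular agent framework structures its plans.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

@dataclass
class Step:
    name: str
    run: Callable[[Dict], bool]         # returns True on success
    needs_approval: bool = False        # conditional approval gate
    fallback: Optional["Step"] = None   # alternative to try if this step fails

def execute_plan(steps: List[Step], state: Dict, approve: Callable[[str], bool]) -> List[str]:
    """Run steps in order. Gated steps run only if approve(name) returns True;
    a failed step tries its fallback once; any unrecovered failure stops the plan."""
    log = []
    for step in steps:
        if step.needs_approval and not approve(step.name):
            log.append(f"{step.name}: rejected, stopping")
            break
        ok = step.run(state)
        if not ok and step.fallback is not None:
            ok = step.fallback.run(state)
            log.append(f"{step.name}: failed, tried {step.fallback.name}: {'ok' if ok else 'failed'}")
        else:
            log.append(f"{step.name}: {'ok' if ok else 'failed'}")
        if not ok:
            break
    return log

# Hypothetical step functions for "Prepare monthly performance summary".
def pull_from_api(state):
    return False                          # simulate the metrics API being down

def pull_from_export(state):
    state["metrics"] = {"completed": 42}  # fall back to a previously exported file
    return True

def draft_summary(state):
    state["draft"] = f"Tasks completed this month: {state['metrics']['completed']}"
    return True

def send_report(state):
    state["sent"] = True
    return True

plan = [
    Step("pull_metrics", pull_from_api, fallback=Step("pull_from_export", pull_from_export)),
    Step("draft_summary", draft_summary),
    Step("send_report", send_report, needs_approval=True),
]

state = {}
log = execute_plan(plan, state, approve=lambda name: True)
```

Because the plan is data rather than a fixed script, the same executor can run a different plan tomorrow; the agent’s job is to produce the plan, and code like this keeps its execution predictable.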

Step 4: Action — Executing Across Systems

This is where agentic AI moves from “intelligent assistant” to “operational participant.”

It can:

- Call APIs

- Update CRM records

- Trigger workflows

- Send emails

- Schedule meetings

- Generate dashboards

- Modify tickets

This happens through tool integrations.

Technically, this involves:

- API calls

- Function calling

- Workflow engines

- Secure connectors

- Identity-based permission systems

And this is where governance becomes critical.

Because once an AI system can act, its scope of impact expands significantly.
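Function calling usually means the model proposes a tool name plus arguments, and ordinary code decides whether to run it. Here is a minimal sketch of that dispatch with an identity-based permission check. The tool names, scope strings, and the JSON shape with `name` and `arguments` keys are assumptions for illustration, loosely modeled on common function-calling formats rather than any specific vendor’s API.

```python
import json
from typing import Callable, Dict, Set, Tuple

# Registry: tool name -> (function, permission scope the calling identity must hold).
TOOLS: Dict[str, Tuple[Callable[..., str], str]] = {}

def tool(name: str, required_scope: str):
    """Decorator that registers a function as a tool guarded by a permission scope."""
    def register(fn):
        TOOLS[name] = (fn, required_scope)
        return fn
    return register

@tool("update_crm_record", required_scope="crm:write")
def update_crm_record(record_id: str, status: str) -> str:
    return f"record {record_id} set to {status}"   # stand-in for a real CRM API call

@tool("send_email", required_scope="email:send")
def send_email(to: str, subject: str) -> str:
    return f"queued email to {to}: {subject}"      # stand-in for a real mail API call

def dispatch(tool_call_json: str, agent_scopes: Set[str]) -> str:
    """Run a model-proposed tool call only if it names a known tool and the agent holds its scope."""
    call = json.loads(tool_call_json)
    if call["name"] not in TOOLS:
        raise ValueError(f"unknown tool: {call['name']}")
    fn, scope = TOOLS[call["name"]]
    if scope not in agent_scopes:
        raise PermissionError(f"agent lacks scope {scope!r} for {call['name']}")
    return fn(**call["arguments"])

# What a model might emit when asked to close a deal record.
proposed = json.dumps({"name": "update_crm_record",
                       "arguments": {"record_id": "A-17", "status": "closed"}})
result = dispatch(proposed, agent_scopes={"crm:write"})
```

Keeping the permission check outside the model means a hallucinated or manipulated tool call still cannot exceed the scopes the agent’s identity was granted.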

Step 5: Reflection — Evaluating and Adjusting

This is often overlooked.

Agentic AI systems don’t just execute once.

They evaluate:

- Did the action succeed?

- Was the response accepted?

- Did approval get rejected?

- Did new data change the context?

Based on feedback, they:

- Revise plans

- Retry actions

- Escalate to humans

- Adjust strategy

This reflection loop is what makes the system adaptive.

Without reflection, it’s just automation.

With reflection, it becomes iterative and self-correcting.
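The five steps can be tied together in one loop. In this minimal sketch, perception and reasoning are folded into a hypothetical `plan_fn`, which receives the previous attempt’s feedback so it can revise; `act_fn` and `evaluate_fn` are placeholders too. The limit of three attempts before escalating to a human is an arbitrary choice for the example.

```python
from typing import Any, Callable, Dict, Optional, Tuple

def run_with_reflection(
    plan_fn: Callable[[str, Optional[Dict]], Dict],
    act_fn: Callable[[Dict], Dict],
    evaluate_fn: Callable[[Dict], Tuple[bool, Optional[Dict]]],
    goal: str,
    max_attempts: int = 3,
) -> Dict[str, Any]:
    """Plan -> act -> reflect. Revise and retry on failure; escalate after max_attempts."""
    feedback = None
    history = []
    for attempt in range(1, max_attempts + 1):
        plan = plan_fn(goal, feedback)        # planning, informed by the last reflection
        result = act_fn(plan)                 # action
        ok, feedback = evaluate_fn(result)    # reflection: did it work, and if not, why?
        history.append((attempt, ok))
        if ok:
            return {"status": "done", "result": result, "history": history}
    return {"status": "escalated", "reason": feedback, "history": history}

# Hypothetical example: book a meeting slot, learning from "slot taken" feedback.
BUSY = {"09:00", "10:00"}
SLOTS = ["09:00", "10:00", "11:00"]

def plan_fn(goal, feedback):
    tried = feedback["tried"] if feedback else []
    slot = next(s for s in SLOTS if s not in tried)   # skip slots already known to fail
    return {"goal": goal, "slot": slot, "tried": tried + [slot]}

def act_fn(plan):
    return {**plan, "booked": plan["slot"] not in BUSY}

def evaluate_fn(result):
    if result["booked"]:
        return True, None
    return False, {"tried": result["tried"], "why": f"{result['slot']} is taken"}

outcome = run_with_reflection(plan_fn, act_fn, evaluate_fn, "book weekly sync")
```

The difference from plain automation is in `plan_fn`: each retry uses what the last attempt learned instead of repeating the same action.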

The 4 Core Layers of an Agentic AI System

To make this even clearer, think in terms of layers.

A real agentic architecture usually includes:

1. The Brain (LLM)

- Interprets goals

- Reasons through context

- Generates plans

2. Memory Layer

Two types:

- Short-term memory → current session context

- Long-term memory → historical decisions, preferences, patterns

Without memory, agents repeat mistakes or forget constraints.

3. Tool Layer

This is what allows action.

Examples:

- CRM connectors

- ERP APIs

- Ticketing systems

- Productivity dashboards

- Financial software

Tools extend the agent’s reach beyond conversation.

4. Guardrails and Governance Layer

This is the most underestimated layer.

It includes:

- Permission boundaries

- Role-based access

- Approval workflows

- Logging and audit trails

- Escalation rules

Without this layer, autonomy becomes liability.
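Of the four layers, memory is the easiest to picture in code. This sketch keeps a bounded window of recent events as short-term memory and stored constraints as long-term memory, assuming an in-process dictionary stands in for whatever database or vector store a real system would use. `AgentMemory` is a hypothetical class name.

```python
from collections import deque
from typing import Dict, List

class AgentMemory:
    """Short-term: bounded window of recent events. Long-term: persisted facts keyed by topic."""

    def __init__(self, window: int = 5):
        self.short_term = deque(maxlen=window)   # oldest events drop off automatically
        self.long_term: Dict[str, str] = {}      # stand-in for a database or vector store

    def observe(self, event: str) -> None:
        self.short_term.append(event)

    def remember(self, key: str, value: str) -> None:
        self.long_term[key] = value

    def context_for(self, keys: List[str]) -> Dict[str, object]:
        """Assemble what the model sees: recent events plus any stored constraints it asked for."""
        return {
            "recent": list(self.short_term),
            "constraints": {k: self.long_term[k] for k in keys if k in self.long_term},
        }

mem = AgentMemory(window=2)
mem.remember("report_approver", "finance lead")   # a constraint that must survive sessions
for event in ["pulled metrics", "drafted summary", "approval requested"]:
    mem.observe(event)
ctx = mem.context_for(["report_approver", "unknown_key"])
```

The short-term window drops “pulled metrics” once it fills, while the approver constraint persists; that split is what lets an agent stay focused without forgetting rules.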

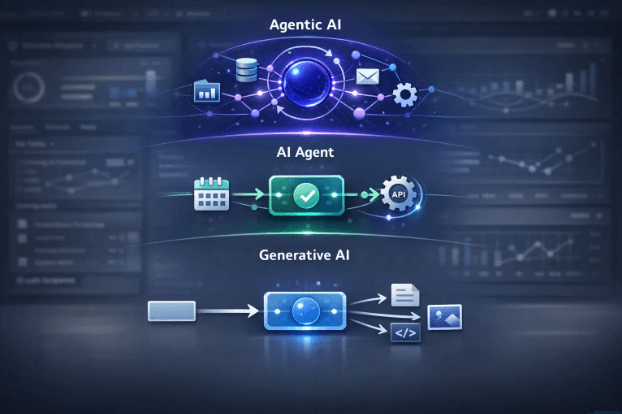

Agentic AI vs Generative AI vs AI Agents

This is where most of the confusion happens.

The terms sound similar. They’re often used interchangeably. And many vendors blur the lines intentionally.

Let’s simplify this in a practical way.

If you understand this section clearly, you’ll instantly spot whether something is truly agentic — or just a chatbot wearing a new label.

1. Agentic AI: It Orchestrates Toward an Outcome

Start with the real shift.

Agentic AI is not just one agent.

It’s a system that:

- Understands a broader objective

- Breaks it into sub-goals

- Coordinates tools or agents

- Executes multi-step plans

- Adjusts if something fails

- Works toward completion

This is outcome-driven autonomy.

Instead of: “Generate a report.”

It thinks:

- What data do I need?

- Which systems contain it?

- How do I clean and analyze it?

- Who should receive it?

- Do I need approval before sending?

And then it moves through those steps.

That’s the difference.

2. Generative AI: It Creates

Generative AI (GenAI) is what most people first experienced through tools like ChatGPT.

Its core capability is:

- Generate text

- Create images

- Write code

- Draft summaries

- Answer questions

It is reactive.

You give it a prompt. It produces an output. That’s it.

It does not:

- Take action on its own

- Connect to systems autonomously

- Track progress toward a goal

- Decide what to do next

It responds. It does not execute.

Example: “Write a follow-up email to a client.” → It writes the email. But you still have to send it.

3. AI Agents: They Perform a Task

An AI agent goes one step further.

It can:

- Take a specific action

- Use tools

- Follow a defined workflow

But its scope is usually limited.

Think of an AI agent as: A worker assigned to one well-defined job.

For example:

- A support bot that resets passwords

- A chatbot that books appointments

- A script that fetches and formats data

It has autonomy within a boundary.

But it doesn’t usually:

- Redefine goals

- Coordinate across multiple systems independently

- Adapt strategy if conditions change significantly

It performs. It doesn’t orchestrate.

Side-by-Side Comparison

Here’s a clearer breakdown:

| Feature | Generative AI | AI Agent | Agentic AI |
| --- | --- | --- | --- |
| Generates content | ✅ | Sometimes | Sometimes |
| Uses external tools | ❌ | ✅ | ✅ |
| Executes actions | ❌ | Limited | Yes |
| Plans multi-step workflows | ❌ | Rarely | ✅ |
| Adapts to changing context | Limited | Limited | Stronger |
| Goal-driven autonomy | ❌ | Task-bound | Outcome-driven |
| Coordinates multiple systems | ❌ | Usually no | Yes |

A Practical Analogy

Let’s use a simple business analogy.

Imagine you want to organize a client webinar.

- Generative AI writes the invite.

- AI Agent schedules the Zoom link.

- Agentic AI:

  - Creates the invite

  - Schedules the session

  - Sends reminders

  - Tracks registrations

  - Notifies stakeholders

  - Generates a post-event report

That’s orchestration. That’s agency.

Agentic AI vs Traditional Automation

Another common confusion:

“Isn’t this just automation?”

Not exactly.

Traditional automation:

- Follows fixed rules

- Executes predefined steps

- Breaks when something unexpected happens

Agentic AI:

- Interprets context

- Decides dynamically

- Adjusts plans when inputs change

Automation runs scripts.

Agentic AI reasons before acting.

That’s a fundamental shift.

Agentic AI Use Cases by Industry

Agentic AI is not limited to one sector.

Its impact varies depending on operational complexity, regulatory constraints, and workflow structure.

Here’s how it plays out across industries.

Agentic AI in Finance

Use cases include:

- Autonomous reconciliation workflows

- Risk anomaly detection with escalation

- Regulatory reporting preparation

- Fraud investigation orchestration

In finance, the emphasis is on auditability, compliance, and structured approvals. While agentic AI focuses on orchestrating multi-step execution across systems, earlier advancements in generative AI in fintech primarily enhanced content generation, risk analysis summaries, and conversational interfaces.

The shift now is from generating financial insights to autonomously coordinating financial workflows — with governance and oversight built in.

Agentic AI reduces coordination overhead while operating within strict regulatory boundaries.

Agentic AI in Healthcare

Use cases include:

- Patient intake coordination

- Insurance claims processing

- Clinical documentation assistance

- Scheduling optimization

Healthcare environments demand tight human oversight. Agentic systems here support structured workflows — not independent medical decision-making — helping reduce administrative burden while maintaining safety.

Agentic AI in IT & DevOps

Use cases include:

- Incident triage and escalation

- Log correlation and root cause analysis

- Automated diagnostics

- Runbook execution with approval

In fast-moving infrastructure environments, delays often stem from coordination rather than technical limitations. Agentic AI shortens the path between detection and action.

Agentic AI in Software Development

Software development is one of the most advanced and rapidly evolving domains for agentic AI adoption.

Unlike coding assistants that respond to prompts, agentic systems in engineering environments can:

- Break down feature requirements into executable tasks

- Generate and refine code across multiple files

- Create and execute tests automatically

- Open pull requests

- Respond to review feedback

- Triage CI/CD failures

- Coordinate release workflows

The shift here is from “help me write code” to “help me deliver a completed feature.”

In modern engineering teams, agentic systems operate across the software development lifecycle — from requirements clarification to production support — while keeping humans in approval and architectural decision roles.

Agentic AI in HR & Workforce Operations

Use cases include:

- Policy compliance monitoring

- Hiring workflow coordination

- Performance reporting automation

- Employee documentation tracking

In workforce operations, agentic AI improves process consistency and reduces administrative friction — especially in multi-step workflows involving approvals and documentation.

What Agentic AI Is Good At

Agentic AI works best when:

- The outcome is clear

- The workflow involves multiple tools

- There are repetitive coordination steps

- Human decisions can be tiered (approve / reject / auto-execute)

It is especially good at reducing:

- Manual follow-ups

- Context switching

- Administrative overhead

Where Agentic AI Fails (And Why That Matters)

Autonomy sounds powerful.

It’s also risky.

1. Errors Compound Across Steps

One incorrect decision in a multi-step workflow doesn’t just produce a wrong answer.

It produces a wrong outcome.

From experience: In real implementations, the danger isn’t that AI makes one mistake. It’s that it executes five steps confidently before anyone notices. Autonomy without visibility compounds errors faster than manual systems ever could.
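A back-of-the-envelope calculation shows why. Assume, as a simplification, that every step in a workflow is correct with the same probability p and that errors are independent. The whole run is correct only if every one of its n steps is:

```latex
P(\text{all } n \text{ steps correct}) = p^{n}, \qquad
0.95^{5} \approx 0.774, \qquad
0.95^{10} \approx 0.599
```

So a step that is right 95% of the time yields a fully correct five-step run only about 77% of the time, and a ten-step run only about 60% of the time. Real failures are rarely independent, so treat these figures as illustrative rather than predictive.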

2. Hallucinations Become Actions

If a chatbot hallucinates, it’s annoying.

If an agent hallucinates and then acts on it, it’s an operational risk.

3. Permissions and Governance Gaps

If an agent can:

- Access financial systems

- Trigger approvals

- Modify records

Then governance isn’t optional — it’s foundational.

Agentic AI Risks and Governance

Before deploying agentic systems, organizations need:

- Tiered action rules (Suggest → Draft → Execute with approval → Auto-execute)

- Least-privilege access controls

- Clear audit trails

- Human-in-the-loop checkpoints

Start with bounded use cases.

Scale gradually.

Don’t give full autonomy on day one.
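The tiered action rules can be enforced in ordinary code. In this minimal sketch, each action maps to the highest tier it may reach, anything unlisted falls back to suggest-only (a least-privilege default), approval-tier actions need a human callback to return `True`, and every decision is appended to an audit log. The action names and the policy itself are hypothetical.

```python
from datetime import datetime, timezone
from enum import IntEnum
from typing import Callable, Dict, List, Optional

class Tier(IntEnum):
    SUGGEST = 1
    DRAFT = 2
    EXECUTE_WITH_APPROVAL = 3
    AUTO_EXECUTE = 4

# Hypothetical policy: the highest tier each action may reach.
POLICY: Dict[str, Tier] = {
    "draft_reply": Tier.DRAFT,
    "update_ticket_status": Tier.AUTO_EXECUTE,
    "issue_refund": Tier.EXECUTE_WITH_APPROVAL,
}

AUDIT_LOG: List[Dict[str, str]] = []

def gate(action: str, approver: Optional[Callable[[str], bool]] = None) -> str:
    """Decide what the agent may do with `action`, and record the decision in the audit log."""
    tier = POLICY.get(action, Tier.SUGGEST)          # unlisted actions get the least privilege
    if tier == Tier.AUTO_EXECUTE:
        decision = "execute"
    elif tier == Tier.EXECUTE_WITH_APPROVAL:
        approved = approver is not None and approver(action)   # human-in-the-loop checkpoint
        decision = "execute" if approved else "blocked_pending_approval"
    elif tier == Tier.DRAFT:
        decision = "draft_only"
    else:
        decision = "suggest_only"
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "tier": tier.name,
        "decision": decision,
    })
    return decision
```

For example, `gate("issue_refund")` returns `"blocked_pending_approval"` unless an approver callback signs off, while an unlisted action such as `"delete_customer"` only ever yields `"suggest_only"`. Widening autonomy then becomes a reviewed change to `POLICY` rather than a change to the agent.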

How to Implement Agentic AI (Step-by-Step)

Here’s the practical roadmap I recommend.

In my experience leading AI and automation rollouts across cross-functional teams, the projects that succeed start small, define boundaries early, and measure outcomes relentlessly.

Step 1: Choose a Low-Risk, High-Friction Use Case

Start where:

- The workflow is repetitive

- Mistakes are reversible

- Outcomes are measurable

Step 2: Define Allowed Actions

Decide clearly:

- What can it suggest?

- What can it draft?

- What requires approval?

- What can it execute automatically?

Step 3: Ensure Data Quality and Visibility

This is where most projects quietly fail.

Agentic systems depend on reliable signals.

If your underlying productivity data, task visibility, or operational metrics are unclear, your AI will automate confusion.

After 15+ years helping teams improve productivity systems, one thing is consistent: you cannot automate what you cannot measure clearly. Before introducing intelligent agents, organizations need clarity on where time goes, where delays occur, and what execution actually looks like.

Agentic AI amplifies whatever system you already have — good or bad.

How to Measure Whether Agentic AI Is Actually Working

Don’t just track “AI adoption.”

Track outcomes.

Measure:

- Cycle time reduction

- Escalation rate

- Error/override rate

- Human approval frequency

- Cost per completed workflow

- SLA improvements

If you can’t quantify improvement, you’re experimenting — not transforming.
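Most of these metrics fall out of a simple per-run log. The sketch below assumes a hypothetical `WorkflowRun` record and a pre-agent baseline cycle time you measured yourself. It divides total spend (including failed runs) by completed runs, which is one reasonable definition of cost per completed workflow but not the only one. SLA tracking is left out because it depends on how your SLAs are defined.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class WorkflowRun:
    cycle_minutes: float
    escalated: bool        # handed to a human because the agent could not finish
    overridden: bool       # a human corrected or reversed the agent's output
    needed_approval: bool
    cost: float            # e.g. model and infrastructure spend for this run
    completed: bool

def outcome_metrics(runs: List[WorkflowRun], baseline_cycle_minutes: float) -> Dict[str, float]:
    """Outcome metrics for a batch of agent runs, compared with a pre-agent baseline."""
    n = len(runs)
    completed = [r for r in runs if r.completed]
    avg_cycle = sum(r.cycle_minutes for r in runs) / n
    return {
        "cycle_time_reduction": 1 - avg_cycle / baseline_cycle_minutes,
        "escalation_rate": sum(r.escalated for r in runs) / n,
        "override_rate": sum(r.overridden for r in runs) / n,
        "approval_frequency": sum(r.needed_approval for r in runs) / n,
        # Failed runs still cost money, so their spend is spread over completed ones.
        "cost_per_completed": sum(r.cost for r in runs) / len(completed) if completed else float("inf"),
    }

runs = [
    WorkflowRun(30, False, False, True, 0.40, True),
    WorkflowRun(50, True, False, True, 0.60, False),
    WorkflowRun(40, False, True, False, 0.50, True),
    WorkflowRun(40, False, False, False, 0.50, True),
]
metrics = outcome_metrics(runs, baseline_cycle_minutes=80)
```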

The Missing Ingredient: Operational Visibility

There’s a critical truth many organizations overlook when adopting agentic AI:

Agentic AI does not create clarity. It acts on the clarity that already exists.

These systems depend on signals — workflow data, system logs, performance metrics, approval trails, and process definitions. If those signals are fragmented or unreliable, autonomy doesn’t solve the problem. It accelerates it.

Before scaling agentic AI, organizations need to ensure they have:

- Clear visibility into how work actually flows

- Defined ownership across systems and teams

- Reliable operational data

- Measurable performance indicators

- Transparent accountability structures

Without that foundation, agentic systems risk making fast decisions on incomplete information.

In practice, successful adoption isn’t just about deploying smarter models. It’s about strengthening the operational intelligence layer beneath them — the systems that provide clean data, structured workflows, and governance boundaries.

At EmergeAI Technologies, we approach agentic AI transformation from this foundation-first mindset. Autonomy is introduced only after visibility, measurement, and control mechanisms are in place. Because intelligent execution requires disciplined infrastructure.

Automation without observability creates chaos. Autonomy built on operational clarity creates leverage.

That distinction determines whether agentic AI becomes a competitive advantage — or a governance risk.

When Should You Not Use Agentic AI?

Avoid it when:

- The workflow changes daily

- The risk tolerance is low

- Data quality is inconsistent

- Governance structures are immature

Agentic AI is powerful. But power without discipline creates fragility.

Final Thoughts

Agentic AI represents a shift from “thinking machines” to “acting systems.”

That’s exciting.

It’s also serious.

Used well, it reduces coordination drag and unlocks execution speed.

Used poorly, it compounds errors and obscures accountability.

Start small. Govern tightly. Measure outcomes.

And before automating decisions, make sure you can see reality clearly.

Because the smartest agent in the world still depends on the quality of the system it’s acting within.

FAQs

What is agentic AI?

Agentic AI is an artificial intelligence system that can understand a goal, create a plan, use tools, and take actions to complete tasks with minimal human supervision. Unlike traditional AI that only generates responses, agentic AI works toward achieving outcomes.

How is agentic AI different from generative AI?

Generative AI creates content such as text, images, or code based on prompts. Agentic AI goes further: it can plan steps, make decisions, and execute actions across systems to achieve a defined objective. Generative AI answers; agentic AI acts.

Is agentic AI the same as an AI agent?

Not exactly. An AI agent is usually designed to perform a specific task. Agentic AI refers to a broader system that may coordinate multiple agents, tools, and workflows to accomplish complex, multi-step goals autonomously.

How does agentic AI work?

Agentic AI typically follows a loop:

- Understand the goal

- Gather relevant data

- Plan the required steps

- Execute actions using tools or APIs

- Evaluate results and adjust

This perception → planning → action → reflection cycle allows it to operate with autonomy.

What are some examples of agentic AI?

Examples include:

- AI systems that resolve customer service tickets end-to-end

- IT support agents that diagnose issues and trigger remediation workflows

- Finance automation agents that reconcile data and flag discrepancies

- Workflow agents that schedule meetings, send updates, and track follow-ups

What are the benefits of agentic AI?

Key benefits include:

- Faster execution across systems

- Reduced manual coordination

- Improved operational efficiency

- 24/7 task management

- Lower administrative overhead

It’s particularly useful for repetitive, multi-step workflows.

What are the risks of agentic AI?

The main risks include:

- Errors compounding across multiple automated steps

- Hallucinations leading to incorrect actions

- Security and permission misuse

- Lack of visibility into decision-making

- Governance and compliance challenges

That’s why guardrails and human oversight are critical.

Will agentic AI replace human workers?

No. Agentic AI is designed to augment human work, not replace it. It can handle repetitive and coordination-heavy tasks, but strategic decision-making, judgment, and accountability still require human oversight.

Is agentic AI safe?

It can be safe if implemented with:

- Clear access controls

- Tiered approval systems

- Audit trails

- Human-in-the-loop safeguards

- Continuous monitoring

Without governance, autonomy increases operational risk.

When should a business consider agentic AI?

A business should consider agentic AI when:

- Workflows are repetitive and cross multiple tools

- Delays occur due to manual follow-ups

- Execution gaps reduce productivity

- Clear success metrics can be defined

It works best when combined with strong operational visibility and measurable outcomes.